We do website analysis and strategy reports for $850, and we’ve seen them offered elsewhere for $1,000 or more, but you can also have one done automatically by robots online for free. How do the free services compare?

We ran our lab site, www.MyFreshPlans.com, through a bunch of them and here’s what we found out:

- SEOScores: SEOScores told us what our meta tags said, checked for the main keyword we identified on several inner pages, told us the number of links and indexed pages for Google and Yahoo, gave us our PageRank and Alexa rank, told us the domain age, and also gave us our code-to-text ratio. This is a lot of information, though the tool incorrectly told us we had no indexed pages and no blog. We got no score, actually, and not much help deciding what any of the data meant.

- Website Grader: Website Grader is HubSpot’s tool, so it’s more stylish than most. It gave FreshPlans a grade of 96 out of 100, based on our blog rating, metadata, MOZRank, social media activity, domain info, and inlinks. Website Grader found 1,040 indexed pages and gave us much of the info SEOScores did, with some specific suggestions for improving our score. Website Grader has now been replaced by Marketing Grader.

- Site Report Card: This one gave us options for what we’d like it to check on, from spelling errors to broken links. We had it check everything and it gave us a 7.83 out of 10. On further study, it was clear that our rather low score was based on one item: we had 9/10 or 10/10 on most items, but only a 1/10 for load time. Like the others, this tool gave us our Alexa ranking, but it checked fewer items overall.

- WooRank: WooRank is cute and fun to use. It gave us a 52.5 out of 100. It included visitors (the estimate was way off), indexed pages (it found only 508), on-site elements, and a number of interesting tech and social media items. WooRank docked us for having Flash (videos, maybe?) and frames (possibly our single Amazon ad?). It also disliked our 9.11% text-to-code ratio and our lack of participation in Dublin Core.

- W3C Validation Service: This isn’t exactly a website grader, but after getting some warnings from various tools, we ran the site through the validator. Our Amazon affiliate links, over which we really have little control apart from the option not to use them, are riddled with errors. We have no other errors, and we’re not sure how much the errors in those links matter. You should check your site here, though, if your designer didn’t do it when it was built, because bad code can be harmful to your website’s performance.

- SEOMoz Trifecta: The Trifecta gave us a mere 4% based on PageRank, links, and Alexa and Compete ranks. We’re a little surprised about this, since our site has a MOZRank of 4 (out of 10, not 100), but there it is. There’s a page with suggestions for improvement, but they are actually all links to good articles about SEO and web design.

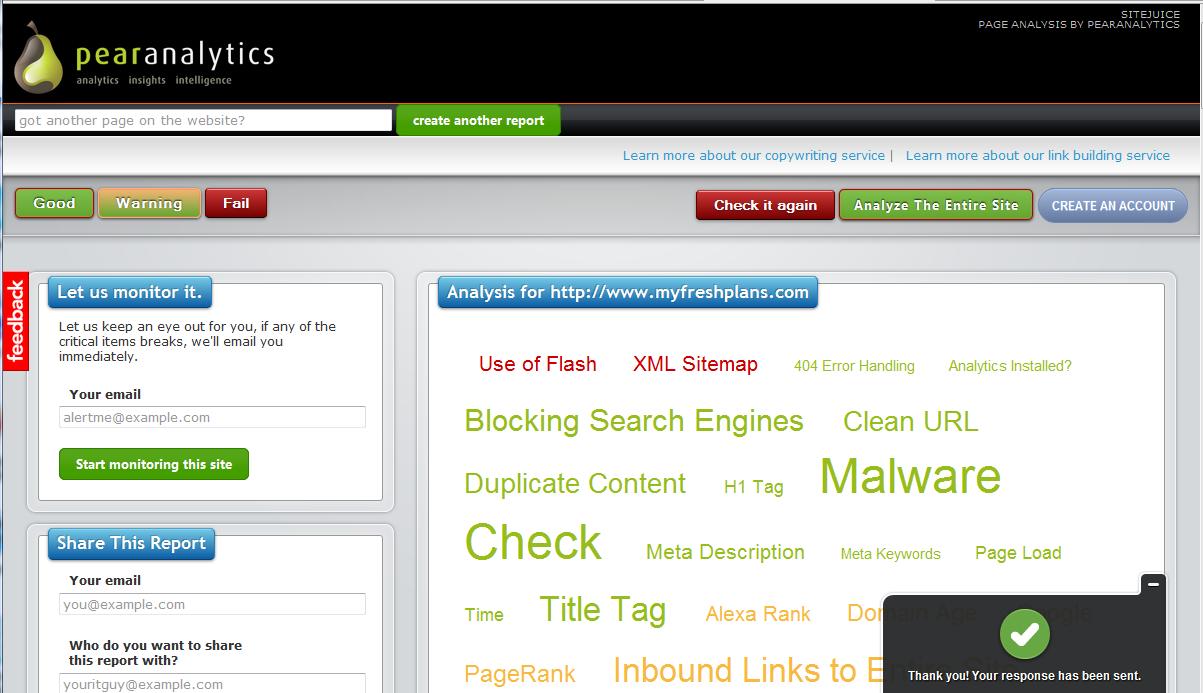

- PearAnalytics: Pear is fun to use and gives a bright and playful report. There’s a tag cloud with red, green, and yellow indicators. Pear deplored our lack of sitemap and use of Flash, admired our meta tags, code and content, and recommended that we work on our domain age, PageRank, and links. We didn’t get a score or grade.
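Several of these tools report a text-to-code ratio without saying how they calculate it. As a rough illustration only, here is a minimal Python sketch that assumes the ratio means visible text characters divided by total HTML characters, ignoring anything inside script and style tags. The function name `text_to_code_ratio` and that definition are our assumptions, not the method any of the graders above actually uses, so don't expect it to reproduce their numbers.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects text nodes, ignoring the contents of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep text only when we are not inside a skipped element.
        if self._skip_depth == 0:
            self.chunks.append(data)


def text_to_code_ratio(html: str) -> float:
    """Visible text as a percentage of total HTML length (assumed definition)."""
    if not html:
        return 0.0
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    # Collapse runs of whitespace so indentation does not count as text.
    text = " ".join(" ".join(parser.chunks).split())
    return 100.0 * len(text) / len(html)
```

You would pass it the raw HTML source of a page as a string. A different definition (counting bytes rather than characters, say, or including alt text) would give a different percentage, which may be one reason the tools don't agree.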

So how valuable are these tools? They definitely provide some useful data. In fact, we always run sites through our favorite tool first thing to get those basics: domain age, links, etc. We can find them ourselves, but why take the time? Unfortunately, as we’ve seen from trying out a number of different tools, they don’t all return the same results, so someone has to be wrong. Our indexed pages, for example, were counted at several different numbers ranging from 0 to 1,040. Google says 1,190.

Apart from accuracy of data, we also found that the tools don’t agree with one another at all on the overall ranking of the site. Our scores ranged from 4 to 96 out of 100. It’s hard to feel much confidence under those circumstances. Part of this is disagreement about what’s important. There are also things a robot can’t tell: our Flash, for example, is being used appropriately. The graders can’t tell the difference between our educational SWF object video and an all-Flash splash page.
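To see the disagreement at a glance, it helps to put the scores we received on a common scale. The Python sketch below does that arithmetic with the four numeric scores reported above. It treats the Trifecta’s 4% as 4 out of 100, and the variable and function names are ours, purely for illustration.

```python
# Scores reported above, as (score, maximum possible score).
scores = {
    "Website Grader": (96, 100),
    "Site Report Card": (7.83, 10),
    "WooRank": (52.5, 100),
    "SEOMoz Trifecta": (4, 100),  # reported as 4%
}


def normalize(raw):
    """Rescale every (score, maximum) pair to a 0-100 scale."""
    return {name: 100.0 * value / scale for name, (value, scale) in raw.items()}


normalized = normalize(scores)
lowest = min(normalized, key=normalized.get)
highest = max(normalized, key=normalized.get)
spread = normalized[highest] - normalized[lowest]

for name, value in sorted(normalized.items(), key=lambda item: item[1]):
    print(f"{name}: {value:.1f} out of 100")
print(f"Spread between {lowest} and {highest}: {spread:.1f} points")
```

Rescaling only makes the numbers comparable on paper. Each tool weighs different factors, so the same number from two tools still doesn’t mean the same thing.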

If we were concerned about our website and wanted to know what steps we ought to take, these tools wouldn’t have much to tell us. We should have a sitemap, they say, and an older domain. Perhaps if we had more issues, we’d be getting more advice, but a human being would be more useful when it comes to specific steps for us to take.

If you want to use an automatic tool, we’d suggest choosing one and using it every few months to see how you’ve improved based on its standards. Because the tools are inconsistent with one another, switching among several of them won’t show you improvement over time.
